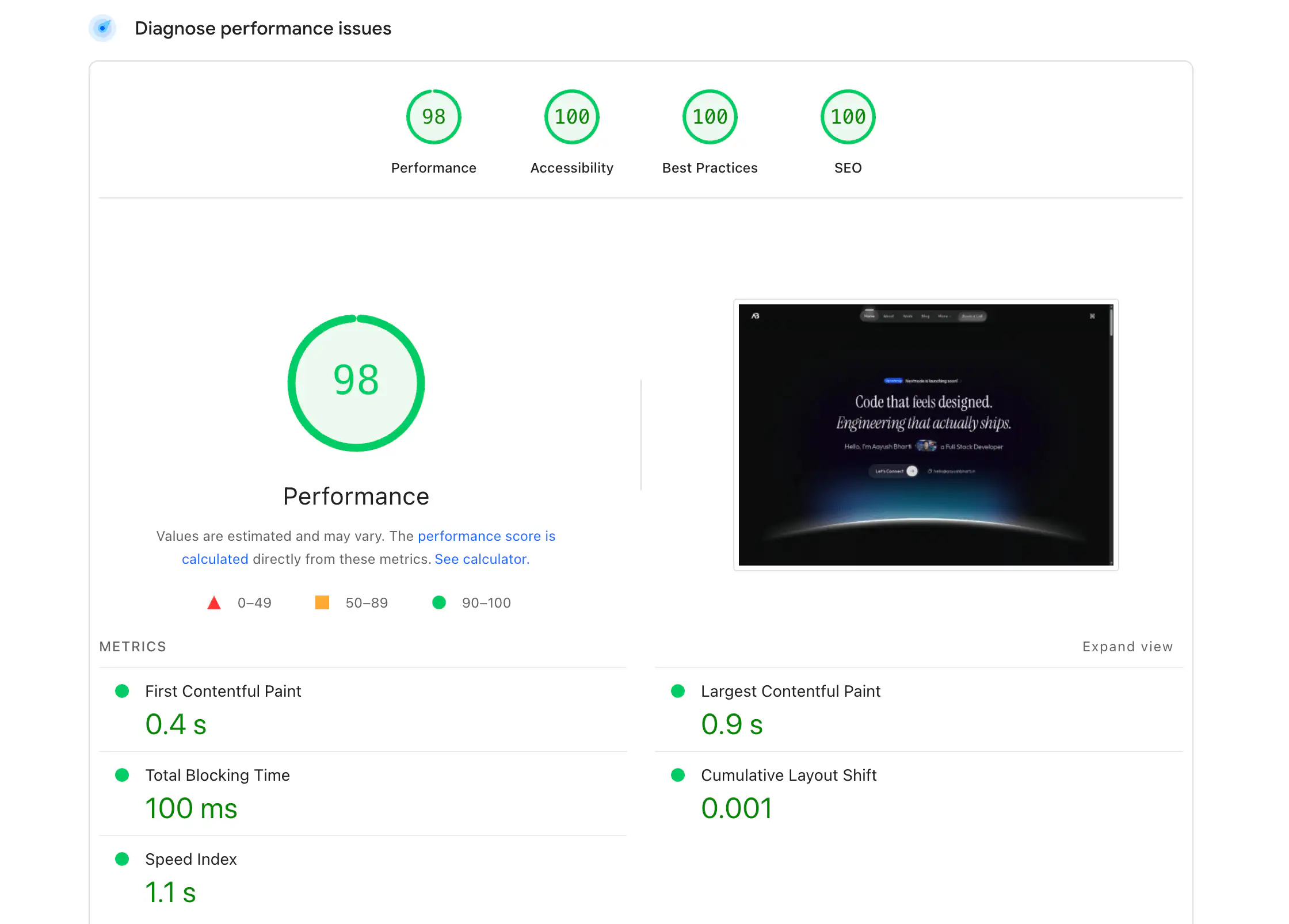

Your Next.js app scores a 54 on Lighthouse. You shipped it three months ago with a perfect 100, and now there's an analytics SDK, a cookie banner, two icon libraries you imported wrong, and a client component wrapping your entire layout because someone needed useState in the header. I've been there — more than once — and the fix is never one silver bullet. It's twenty small decisions compounding in the right direction.

This is every optimisation technique I've used across production Next.js apps, ordered by how quickly you'll see results. No fluff, no "it depends" without telling you what it depends on. Let's fix your score.

1. Bundle size — the one that surprises everyone

Before optimising anything, you need to know what you're shipping. Most Next.js apps are 2-3x larger than they need to be, and the culprit is almost never your code (I know, that hurts) — it's your dependencies.

1.1 Analyse first, cut second

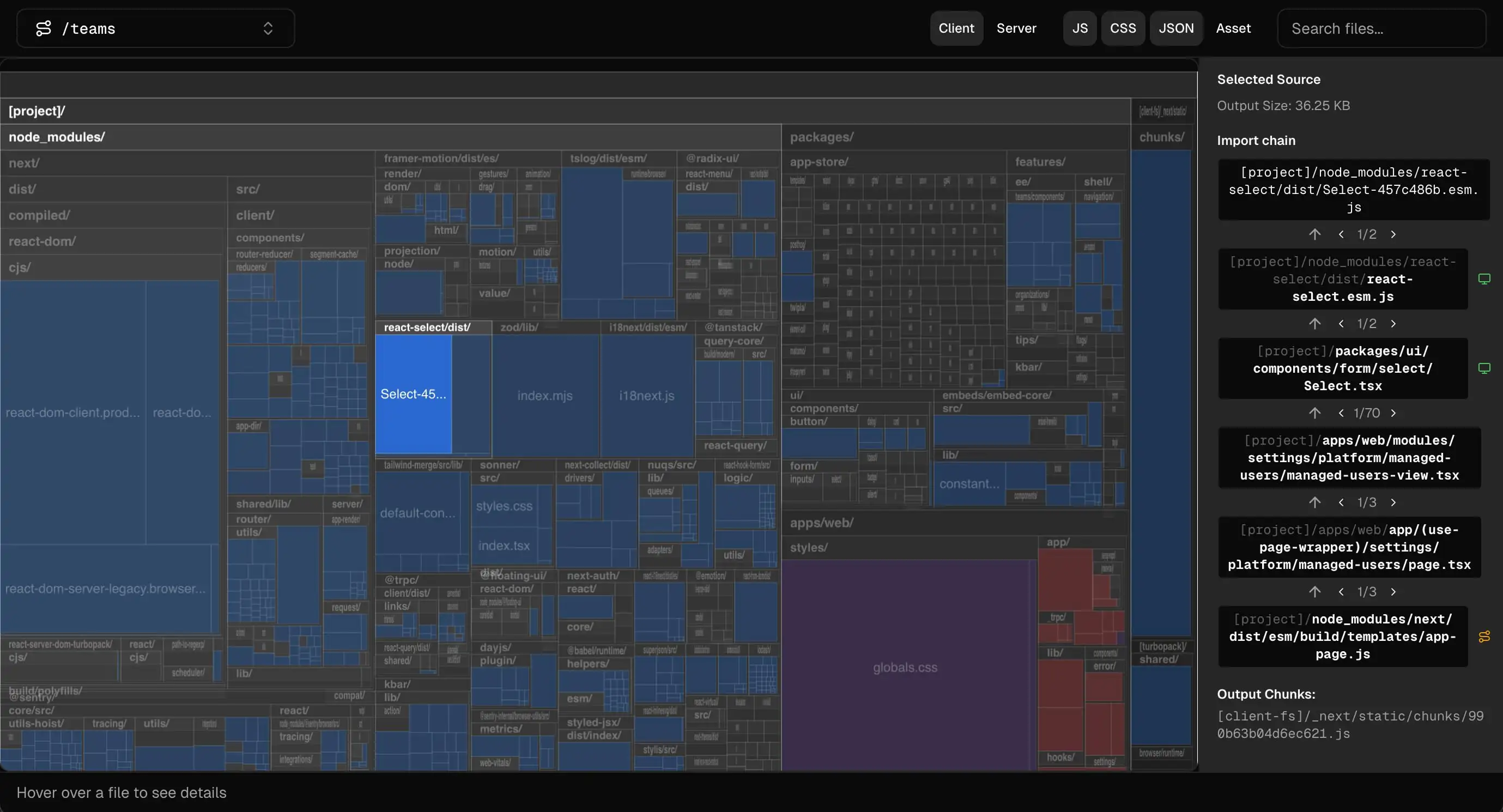

Run the built-in analyzer (Next.js 16.1+):

```sh
npx next experimental-analyze
```

You'll get a treemap showing exactly which packages eat the most space. Look for the usual suspects: moment.js (328KB — replace with date-fns or the native Intl API), full lodash imports, and icon libraries where you imported the entire set instead of individual icons.
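If moment is only there to format dates for display, the native Intl API usually covers it without adding anything to the bundle. A minimal sketch, assuming an en-GB locale pinned to UTC; the helper names formatDate and formatRelativeDays are illustrative, not from any library:

```typescript
// Date formatting with the built-in Intl API.
// The locale and the helper names are assumptions for illustration.
const dateFormatter = new Intl.DateTimeFormat("en-GB", {
  day: "numeric",
  month: "short",
  year: "numeric",
  timeZone: "UTC", // pin the time zone so server and client render the same text
});

const relativeFormatter = new Intl.RelativeTimeFormat("en", { numeric: "auto" });

function formatDate(date: Date): string {
  return dateFormatter.format(date);
}

// Whole-day difference between `date` and `now`, phrased relative to `now`.
function formatRelativeDays(date: Date, now: Date): string {
  const msPerDay = 24 * 60 * 60 * 1000;
  const days = Math.round((date.getTime() - now.getTime()) / msPerDay);
  return relativeFormatter.format(days, "day");
}

const published = new Date(Date.UTC(2024, 2, 12)); // 12 March 2024
const today = new Date(Date.UTC(2024, 2, 15));

console.log(formatDate(published)); // e.g. "12 Mar 2024"
console.log(formatRelativeDays(published, today)); // "3 days ago"
```

Creating the formatters once at module level and reusing them avoids rebuilding them on every call.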

1.2 The barrel export trap

Some packages export hundreds of modules from a single entry point — icon libraries, utility kits, component frameworks. You import one function and the bundler pulls in everything because it can't tree-shake inside node_modules.

Next.js has a fix for this. Add the package to optimizePackageImports and it rewrites your barrel imports to direct imports at build time — same developer experience, fraction of the bundle:

```ts
const nextConfig = {
  experimental: {
    optimizePackageImports: ["@phosphor-icons/react", "recharts"],
  },
};
```

Many popular libraries (lodash-es, date-fns, @mui/material, and others) are already optimised by default, so check the list before adding them manually. I added two packages on this site and shaved ~180KB off the client bundle with zero code changes.

1.3 Server Components — stop shipping JS you don't need

Every component in App Router is a Server Component by default, and it ships zero JS to the browser. The mistake I see most often: marking an entire page as "use client" because one small piece needs interactivity.

```tsx
"use client"; // Ships the entire page as JS

import { useState } from "react";

export default function BlogPost({ post }) {
  const [liked, setLiked] = useState(false); // State forces everything client-side

  return (
    <article>
      <h1>{post.title}</h1>
      <p>{post.content}</p> {/* Static content, no reason to ship as JS */}
      <button onClick={() => setLiked(true)}>Like</button>
    </article>
  );
}
```

The fix: drop "use client" from the page and move the button into its own small client component. The page then renders <LikeButton postId={post.id} /> and only that tiny piece ships JS.

Push "use client" as deep into the component tree as possible. The boundary should wrap the smallest interactive surface (a button, a form, a search input), not a page or a layout.

Passing a Server Component as children to a Client Component? It still runs on the server. This is how you compose interactive wrappers around static content without shipping the static content as JS.

- Replace moment with date-fns or native Intl.DateTimeFormat
- Use specific imports for icon libraries, never import * from
- Audit with the bundle analyzer after every major dependency addition
- Target under 500KB total JS per page; 1500KB is the absolute ceiling
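The 500KB target and 1500KB ceiling are easier to hold when a script checks them for you. A hypothetical sketch: the chunk list would come from your build output or analyzer report, and the Chunk shape and checkBudget name are assumptions, not a Next.js API:

```typescript
// Hypothetical budget check. In a real pipeline the chunk list would be read
// from the build output; here it is hard-coded for illustration.
interface Chunk {
  name: string;
  bytes: number;
}

interface BudgetResult {
  totalKB: number;
  status: "ok" | "over-target" | "over-ceiling";
}

const TARGET_KB = 500; // per-page target
const CEILING_KB = 1500; // absolute ceiling

function checkBudget(chunks: Chunk[]): BudgetResult {
  const totalBytes = chunks.reduce((sum, chunk) => sum + chunk.bytes, 0);
  const totalKB = Math.round(totalBytes / 1024);
  if (totalKB > CEILING_KB) return { totalKB, status: "over-ceiling" };
  if (totalKB > TARGET_KB) return { totalKB, status: "over-target" };
  return { totalKB, status: "ok" };
}

const result = checkBudget([
  { name: "framework", bytes: 180 * 1024 },
  { name: "page", bytes: 90 * 1024 },
  { name: "charts", bytes: 310 * 1024 },
]);
console.log(result); // { totalKB: 580, status: "over-target" }
```

One way to use it is to fail the CI job on "over-ceiling" and only warn on "over-target".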

2. Core Web Vitals and optimising FCP/LCP

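Google uses Core Web Vitals as a ranking signal. Strictly, only three metrics carry that name (LCP, INP, and CLS); FCP is a supporting metric, but it feeds your Lighthouse score and is worth tracking alongside them. Here's what they actually mean and what "good" looks like: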

| Metric | What it measures | Good | Needs work | Poor |
|---|---|---|---|---|
| FCP (First Contentful Paint) | Time until first text/image appears | < 1.8s | 1.8 - 3.0s | > 3.0s |
| LCP (Largest Contentful Paint) | Time until the largest visible element renders | < 2.5s | 2.5 - 4.0s | > 4.0s |
| INP (Interaction to Next Paint) | Delay between user interaction and visual response | < 200ms | 200 - 500ms | > 500ms |
| CLS (Cumulative Layout Shift) | How much the page layout shifts unexpectedly | < 0.1 | 0.1 - 0.25 | > 0.25 |

INP replaced FID (First Input Delay) in March 2024 — if you're still reading articles that reference FID, they're outdated.
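If you collect these numbers yourself, the thresholds from the table above translate into a small lookup. A sketch, with rateMetric as an illustrative name rather than a function from any library:

```typescript
// Thresholds copied from the table above. Time-based metrics are in
// milliseconds; CLS is a unitless score.
type Metric = "FCP" | "LCP" | "INP" | "CLS";
type Rating = "good" | "needs-work" | "poor";

const THRESHOLDS: Record<Metric, { good: number; poor: number }> = {
  FCP: { good: 1800, poor: 3000 },
  LCP: { good: 2500, poor: 4000 },
  INP: { good: 200, poor: 500 },
  CLS: { good: 0.1, poor: 0.25 },
};

// Boundaries follow the table: below `good` is good, above `poor` is poor,
// anything in between needs work.
function rateMetric(metric: Metric, value: number): Rating {
  const { good, poor } = THRESHOLDS[metric];
  if (value < good) return "good";
  if (value <= poor) return "needs-work";
  return "poor";
}

console.log(rateMetric("INP", 150)); // "good"
console.log(rateMetric("LCP", 3100)); // "needs-work"
console.log(rateMetric("CLS", 0.3)); // "poor"
```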

2.1 Measure before you optimise

Run PageSpeed Insights on your production URL — not localhost, not a preview deployment. That's what Google actually measures.

For real-user data, check the Chrome User Experience Report (CrUX) — this is what Google uses for search rankings. For continuous monitoring, add @vercel/speed-insights to your layout.

2.2 Images — the biggest LCP lever

next/image handles format conversion (WebP/AVIF), responsive sizing, and lazy loading automatically. Three things most people get wrong:

1. Mark the hero image as priority. Your LCP element is usually the largest above-the-fold image. By default, next/image lazy loads everything; the priority prop disables that and adds a <link rel="preload"> to the document head. (Next.js 16 renames this prop to preload and deprecates priority, so check which one your version expects.)

```tsx
import Image from "next/image";

<Image
  src="/hero.webp"
  alt="Hero image"
  width={1200}
  height={630}
  priority
/>
```

2. Use blur placeholders. LQIP (Low Quality Image Placeholders) show a blurred preview instantly while the full image loads. Add placeholder="blur" with a blurDataURL.

3. Don't lazy-load above-the-fold images. If it's visible without scrolling, add priority or loading="eager".

2.3 Fonts — zero layout shift

next/font self-hosts fonts and eliminates external network requests. Use display: "swap" so text renders immediately with a fallback, and adjustFontFallback (enabled by default) calculates CSS overrides so the font swap causes zero CLS.

```tsx
import { Inter } from "next/font/google";

const inter = Inter({ subsets: ["latin"], display: "swap" });

export default function RootLayout({ children }) {
  return (
    <html className={inter.className}>
      <body>{children}</body>
    </html>
  );
}
```

2.4 Defer third-party scripts

Analytics, chat widgets, cookie banners — they all want to load during your critical rendering path. Push them out with next/script:

```tsx
import Script from "next/script";

<Script
  src="https://analytics.example.com/script.js"
  strategy="lazyOnload" // Loads after everything else
/>
```

| Strategy | When it loads | Use for |
|---|---|---|
| beforeInteractive | Before hydration | Critical A/B testing, bot detection |
| afterInteractive | After some hydration (default) | Analytics, tag managers |
| lazyOnload | After page is idle | Chat widgets, social embeds, cookie banners |

For Google services, use @next/third-parties — it loads GA, Maps, and YouTube embeds with optimised defaults out of the box.

Add preconnect hints for third-party origins. Each one can save roughly 100-500ms of DNS + TCP + TLS handshake time:

```html
<link rel="preconnect" href="https://fonts.googleapis.com" />
<link rel="dns-prefetch" href="https://analytics.example.com" />
```

3. Rendering strategies

Choosing the right rendering strategy has a direct impact on TTFB, FCP, and LCP.

| Strategy | How it works | TTFB | Use when |
|---|---|---|---|
| SSG | HTML generated at build time, served from CDN | Fastest | Landing pages, docs, blogs where content rarely changes |
| ISR | Static + revalidates at a fixed interval | Fast | Product listings, content that changes every few minutes/hours |
| SSR | HTML generated per request | Depends on backend | SEO-critical pages with real-time or personalised data |
| CSR | Renders entirely in browser | N/A | Dashboards, internal tools where SEO doesn't matter |

Start with SSG. Move to ISR if data needs freshness. Move to SSR only if data needs per-request accuracy. CSR is a last resort. If you're on Next.js 16+, look at Partial Prerendering (PPR) — it serves a static shell instantly and streams dynamic sections, combining the best of SSG and SSR in a single page.

4. Code splitting and dynamic imports

Next.js splits code at the route level automatically — each page only loads the JavaScript it needs. But heavy components within a page still land in that page's bundle unless you split them manually (the bundler is helpful, not psychic).

Use next/dynamic for components that are heavy, below the fold, or client-only:

```tsx
"use client"; // ssr: false only works in Client Components

import dynamic from "next/dynamic";

const Chart = dynamic(() => import("@/components/chart"), {
  ssr: false, // Skip server render: this uses browser APIs
  loading: () => <div className="h-64 animate-pulse bg-neutral-100" />,
});
```

Use dynamic imports for: heavy client libraries (charts, editors), browser-only APIs (window, document), below-the-fold content most users never scroll to.

Don't use them for: small shared components, above-the-fold UI, layout components. Every dynamic import creates a separate network request — splitting ten small components into ten chunks is worse than one bundle.
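The same idea works outside components: defer a heavy library until the user actually needs it. A sketch of the load-on-interaction pattern, where lazyOnce is a hypothetical helper and the loader is a stand-in for a real import() call:

```typescript
// lazyOnce is a hypothetical helper: it defers an expensive load until the
// first call, then reuses the same promise for every later call.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      pending = loader();
    }
    return pending;
  };
}

// Stand-in for a heavy module. In an app the loader would be something like
// () => import("some-search-library"), which the bundler splits into its own chunk.
let loadCount = 0;
const loadSearch = lazyOnce(async () => {
  loadCount += 1;
  return {
    search: (query: string, items: string[]) =>
      items.filter((item) => item.includes(query)),
  };
});

async function onSearchInput(query: string): Promise<string[]> {
  const { search } = await loadSearch(); // only the first keystroke pays the load cost
  return search(query, ["next", "react", "node"]);
}

async function demo(): Promise<void> {
  console.log(await onSearchInput("ne")); // ["next"]
  console.log(await onSearchInput("re")); // ["react"]
  console.log(loadCount); // 1
}
demo();
```

Note that this sketch also caches a failed load; a production version would probably reset the stored promise when the loader rejects.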

5. Data fetching and caching

Slow data fetching is the quiet one that bites you. Your rendering strategy doesn't matter if you're waterfalling three sequential API calls before the page can render.

5.1 Parallel data fetching

The most common mistake: sequential awaits when the calls don't depend on each other.

```ts
const user = await getUser(); // 200ms
const posts = await getPosts(); // 300ms
const comments = await getComments(); // 150ms
// Total: 650ms, because each call waits for the previous one
```

```ts
const [user, posts, comments] = await Promise.all([
  getUser(), // 200ms ─┐
  getPosts(), // 300ms ─┤ All start simultaneously
  getComments(), // 150ms ─┘
]);
// Total: 300ms, limited by the slowest call
```

That's 54% less wall-clock time from one change. No library, no config. Promise.all and done.
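To check the difference yourself, here is a self-contained timing sketch. The fetchers reuse the names from the example above but are simulated with setTimeout, with delays scaled down by 10x so it runs quickly:

```typescript
// Simulated fetchers: each one just resolves after a fixed delay.
function fakeFetch<T>(value: T, delayMs: number): Promise<T> {
  return new Promise((resolve) => setTimeout(() => resolve(value), delayMs));
}

const getUser = () => fakeFetch({ name: "Ada" }, 20);
const getPosts = () => fakeFetch(["post-1", "post-2"], 30);
const getComments = () => fakeFetch(["nice"], 15);

async function timeSequential(): Promise<number> {
  const start = Date.now();
  await getUser();
  await getPosts();
  await getComments();
  return Date.now() - start; // roughly 20 + 30 + 15 = 65ms
}

async function timeParallel(): Promise<number> {
  const start = Date.now();
  await Promise.all([getUser(), getPosts(), getComments()]);
  return Date.now() - start; // roughly 30ms, the slowest call
}

async function main(): Promise<void> {
  const sequential = await timeSequential();
  const parallel = await timeParallel();
  console.log({ sequential, parallel });
}
main();
```

Promise.all rejects as soon as any one call rejects. If the page can render with partial data, Promise.allSettled returns every outcome instead.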

5.2 Request deduplication with cache()

React's cache() deduplicates identical requests within a single render pass. If three components all call getUser(), it executes once.
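To make the deduplication concrete, here is a simplified model of it. This is not React's implementation; dedupe and the in-memory data source are assumptions for illustration:

```typescript
// A simplified model of request deduplication: calls with the same argument
// share one promise instead of hitting the data source again.
function dedupe<T>(fn: (id: string) => Promise<T>): (id: string) => Promise<T> {
  const inFlight = new Map<string, Promise<T>>();
  return (id) => {
    let promise = inFlight.get(id);
    if (promise === undefined) {
      promise = fn(id);
      inFlight.set(id, promise);
    }
    return promise;
  };
}

// Stand-in for a real data source; counts how often it is actually hit.
let fetchCount = 0;
const getUser = dedupe(async (id: string) => {
  fetchCount += 1;
  return { id, name: `user-${id}` };
});

async function renderPage(): Promise<void> {
  // Three "components" ask for the same user during one render.
  const [a, b, c] = await Promise.all([getUser("42"), getUser("42"), getUser("42")]);
  console.log(a === b && b === c); // true: all three share one result
  console.log(fetchCount); // 1
}
renderPage();
```

The Map in this sketch lives as long as the module does. React's cache() gives you the same effect without the bookkeeping, scoped to a single server request so nothing leaks between users: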

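```ts
import { cache } from "react";

// API_URL is an assumed environment variable: server-side fetch needs an
// absolute URL, so a bare "/api/users/..." path would throw here.
export const getUser = cache(async (id: string) => {
  const res = await fetch(`${process.env.API_URL}/users/${id}`);
  if (!res.ok) throw new Error(`Failed to load user ${id}: ${res.status}`);
  return res.json();
});
```

5.3 The "use cache" directive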

This is the big one that almost no blog covers yet. "use cache" is a declarative caching directive — first introduced experimentally in Next.js 15 and enabled via Cache Components in Next.js 16. It replaces the old fetch() cache options and unstable_cache.

First, enable it in your config:

```ts
const nextConfig = {
  cacheComponents: true,
};
```

Then use it at the page level, layout level, or individual functions:

```tsx
"use cache";

import { cacheLife } from "next/cache";

export default async function BlogPage() {
  cacheLife("hours"); // Cache this page's output for hours
  const posts = await getAllPosts();
  return <PostList posts={posts} />;
}
```

The built-in cache profiles:

| Profile | Stale | Revalidate | Expire |
|---|---|---|---|
| "default" | 5min | 15min | never |
| "seconds" | 30s | 1s | 1min |
| "minutes" | 5min | 1min | 1hr |
| "hours" | 5min | 1hr | 1 day |
| "days" | 5min | 1 day | 1 week |
| "weeks" | 5min | 1 week | 30 days |
| "max" | 5min | 30 days | 1 year |

If you don't call cacheLife() at all, the default profile is used. For on-demand revalidation, pair it with cacheTag() and call revalidateTag() from an API route or Server Action. This replaces the old route segment configs (revalidate, dynamic, fetchCache) — don't mix both models.
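One way to read the three columns in the profile table: stale governs the client, while revalidate and expire govern the server. The sketch below is a simplified model of the server side only, based on my reading of the docs rather than on Next.js internals; serverAction and the type names are hypothetical:

```typescript
// Simplified server-side model of a cache profile. All durations in seconds.
interface CacheProfile {
  stale: number; // how long the client may reuse a value without asking the server
  revalidate: number; // how often the server refreshes the entry
  expire: number; // after this long without a refresh, regenerate on request
}

const MINUTE = 60;
const HOUR = 60 * MINUTE;
const DAY = 24 * HOUR;

// Two rows from the profile table.
const profiles: Record<"minutes" | "hours", CacheProfile> = {
  minutes: { stale: 5 * MINUTE, revalidate: MINUTE, expire: HOUR },
  hours: { stale: 5 * MINUTE, revalidate: HOUR, expire: DAY },
};

type ServerAction = "serve-cached" | "serve-stale-and-refresh" | "regenerate";

function serverAction(profile: CacheProfile, ageSeconds: number): ServerAction {
  if (ageSeconds < profile.revalidate) return "serve-cached";
  if (ageSeconds < profile.expire) return "serve-stale-and-refresh";
  return "regenerate";
}

console.log(serverAction(profiles.hours, 10 * MINUTE)); // "serve-cached"
console.log(serverAction(profiles.hours, 3 * HOUR)); // "serve-stale-and-refresh"
console.log(serverAction(profiles.hours, 2 * DAY)); // "regenerate"
```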

6. Streaming and Suspense

Traditional SSR waits for the slowest data source before sending anything. If your content loads in 100ms but comments take 2 seconds, the user stares at a blank screen for 2 seconds.

Streaming fixes this — the server sends fast parts immediately and streams slow parts as they resolve:

```tsx
import { Suspense } from "react";

export default async function BlogPost({ params }) {
  const { slug } = await params;
  const post = await getPost(slug); // Fast: 50ms

  return (
    <article>
      <h1>{post.title}</h1>
      <p>{post.content}</p>
      <Suspense fallback={<CommentsSkeleton />}>
        <Comments slug={slug} /> {/* Streams in when ready: 800ms */}
      </Suspense>
    </article>
  );
}
```

Place Suspense boundaries around non-critical data fetchers, below-the-fold sections, and personalised content. Don't wrap above-the-fold content: a loading flash there hurts perceived performance more than it helps.

For page-level streaming, you can also use a loading.tsx file — Next.js wraps the page in a Suspense boundary for you automatically:

```tsx
export default function Loading() {
  return <DashboardSkeleton />;
}
```

This is the simplest way to get streaming: one file, zero Suspense imports, instant loading states for entire route segments.

7. React Compiler

Here's a technique zero performance articles talk about (I checked): stop memoising things manually.

React Compiler analyses your components at build time and automatically inserts useMemo, useCallback, and React.memo where they'll actually help — not where you think they'll help, but where static analysis proves it.

First, install the Babel plugin:

```sh
pnpm add -D babel-plugin-react-compiler
```

Then enable it in your config. Note that this is a top-level option, not inside experimental:

```ts
const nextConfig = {
  reactCompiler: true,
};
```

In most cases, you can remove your manual useMemo/useCallback/React.memo calls, because the compiler analyses the actual dependency graph at build time instead of relying on you listing deps correctly in an array. If a specific component needs to opt out, use the "use no memo" directive. Fewer unnecessary re-renders means better INP.

8. next.config power flags

These are the flags I run in production that most developers don't know exist. (Free performance. No code changes. You're welcome.)

8.1 inlineCss

Inlines CSS directly into HTML instead of serving it as separate files. Eliminates render-blocking CSS requests.

```ts
const nextConfig = {
  experimental: {
    inlineCss: true,
  },
};
```

One fewer network round-trip per page load. Only works in production builds. The tradeoff: inlined CSS can't be cached separately, so returning visitors re-download styles. It works best for first-visit-heavy sites like landing pages and blogs.

8.2 staleTimes

Controls how long the client-side router caches visited pages. By default, dynamic pages are cached for 0 seconds (re-fetched on every navigation) and static pages for 5 minutes.

```ts
const nextConfig = {
  experimental: {
    staleTimes: {
      dynamic: 30, // Cache dynamic pages for 30s on client
      static: 180, // Cache static pages for 3 min on client
    },
  },
};
```

This means navigating back to a previously visited page is instant for 30 seconds instead of triggering a new server request. Big win for apps with frequent back-and-forth navigation.

8.3 serverExternalPackages

Some Node.js packages break when bundled for Server Components — native bindings, packages that use __dirname, or packages with side effects during import. This flag tells Next.js to skip bundling and use native require().

```ts
const nextConfig = {
  serverExternalPackages: ["puppeteer", "canvas"],
};
```

Many common packages (sharp, bcrypt, prisma, and others) are already excluded by default, so you only need this for packages not on the automatic opt-out list.

8.4 removeConsole

Strip console.* calls from production builds, keeping only the methods you list under exclude. Less noise, slightly smaller bundles.

```ts
const nextConfig = {
  compiler: {
    removeConsole: {
      exclude: ["error"], // Keep console.error for debugging
    },
  },
};
```

9. Production checklist

Before you ship, run through this. I've ordered by impact — fix the high-priority items first.

9.1 High Priority

9.2 Medium Priority

9.3 Low Priority

Here's the thing nobody tells you about performance work: hitting a 100 on Lighthouse is easy. Staying there is the actual job.

Every feature you ship, every dependency you add, every "just this one client component" — they're all small withdrawals from a budget your users never agreed to. The sites that stay fast aren't the ones that optimised once. They're the ones that made performance a constraint, not a cleanup task.

Add @vercel/speed-insights to your layout. Set a bundle budget in CI. Make the number visible to your team every week. When someone asks "can we add this 200KB carousel library?" — the dashboard answers for you.

The best Lighthouse score is the one you never have to fix twice.